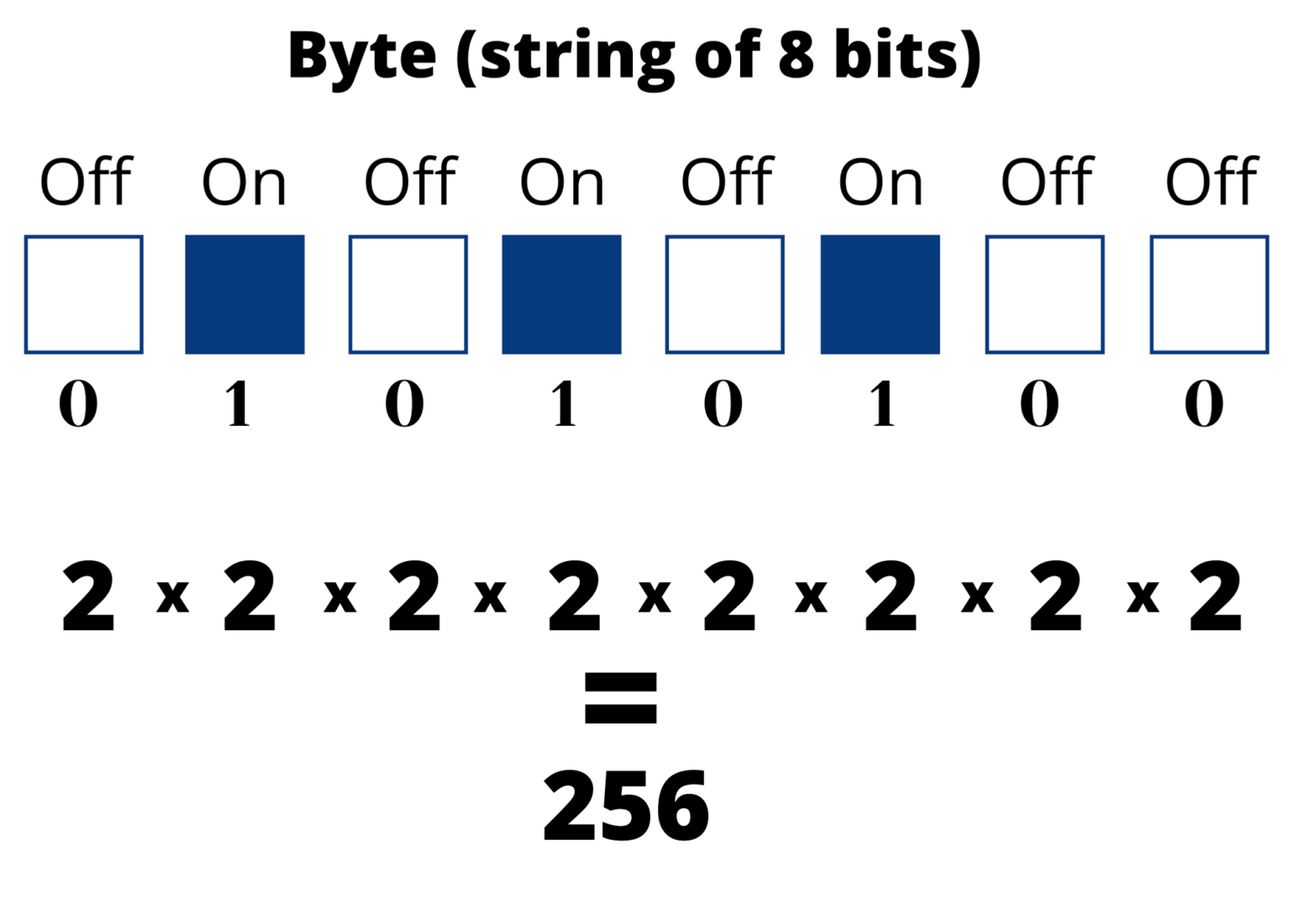

The Intel 8008 ("eight-thousand-eight" or "eighty-oh-eight") is an early byte-oriented microprocessor designed by Computer Terminal Corporation (CTC), implemented and manufactured by Intel, and introduced in April 1972. It is an 8-bit CPU with an external 14-bit address bus that could address 16 KB of memory. Originally known as the 1201, the chip was commissioned by CTC to implement an instruction set of CTC's design for its Datapoint 2200 programmable terminal. Because the chip was delayed and did not meet CTC's performance goals, the 2200 ended up using CTC's own TTL-based CPU instead.

The MOS Technology 6502 (typically pronounced "sixty-five-oh-two" or "six-five-oh-two") is an 8-bit microprocessor designed by a small team led by Chuck Peddle for MOS Technology. The team had previously worked at Motorola on the Motorola 6800 project, and the 6502 is essentially a simplified, less expensive and faster version of that design. When it was introduced in 1975, the 6502 was the least expensive microprocessor on the market by a considerable margin: it initially sold for less than one-sixth the cost of competing designs from larger companies, such as the 6800 or the Intel 8080.

How many different patterns can be made with 1, 2, or 3 bits? Each extra bit doubles the count: 1 bit gives 2 patterns, 2 bits give 4, and 3 bits give 8. By grouping 8 bits into a byte, you can represent more complex information than you can with individual bits. The biggest number you can make with a byte (as an unsigned value) is 255, which in binary is 11111111, because 128+64+32+16+8+4+2+1 = 255.

All numbers in JavaScript are IEEE-754 double-precision floating-point values. These have a 53-bit significand (often called the mantissa: 52 stored bits plus an implicit leading 1), which means that every integer with a magnitude up to about 9 quadrillion, more precisely 9,007,199,254,740,991 (2^53 - 1), is represented exactly.
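The arithmetic in this post comes down to one rule: n bits give 2^n distinct patterns, so the largest unsigned value they can hold is 2^n - 1. The short Python sketch below checks the figures quoted above against that rule. It is an illustration, not code from the original post; the helper names `pattern_count` and `max_unsigned` are hypothetical, and it assumes Python's `float` is an IEEE-754 double (true on virtually all platforms), so it rounds the same way a JavaScript number does.

```python
# Rule used throughout: n bits give 2**n patterns; the largest unsigned value is 2**n - 1.

def pattern_count(bits: int) -> int:
    """Number of distinct patterns that `bits` binary digits can form (hypothetical helper)."""
    return 2 ** bits


def max_unsigned(bits: int) -> int:
    """Largest unsigned integer representable in `bits` bits (hypothetical helper)."""
    return pattern_count(bits) - 1


# 1, 2 and 3 bits: each extra bit doubles the number of patterns.
small_counts = [pattern_count(n) for n in (1, 2, 3)]

# A byte is 8 bits: summing the place values 128+64+32+16+8+4+2+1 gives its maximum.
byte_max = sum(2 ** i for i in range(8))
assert byte_max == max_unsigned(8) == int("11111111", 2)

# A 14-bit address bus (as on the 8008) selects one of 2**14 byte addresses, i.e. 16 KB.
addressable_bytes = pattern_count(14)

# Doubles have a 53-bit significand, so integers up to 2**53 - 1 survive a round trip.
max_safe = 2 ** 53 - 1  # same value as JavaScript's Number.MAX_SAFE_INTEGER
safe_is_exact = int(float(max_safe)) == max_safe
# 2**53 + 1 needs 54 significant bits, so it rounds to 2**53 when stored as a double.
collides = float(2 ** 53 + 1) == float(2 ** 53)

if __name__ == "__main__":
    print(small_counts)
    print(byte_max)
    print(addressable_bytes, addressable_bytes // 1024, "KB")
    print(f"{max_safe:,}", safe_is_exact, collides)
```

The last line shows why 2^53 - 1 is the cutoff: past it, two different integers can map to the same double.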
